More than ever, 3D models have become a "physical" part of our lives, as the many 3D printing services on the internet show.

Some people have a hard time getting a model to print... well, not only to print, but also to write a scientific article, do a job, or just have fun.

In this tutorial you'll learn how to scan 3D objects and use them however you want.

First of all, I would like to thank all the friends who helped me write this tutorial, mainly Bob Max of the ExporttoCanoma blog, which publishes interesting posts about GIS and is now interested in SfM (like every good nerd who works with 3D).

I can't forget Pierre Moulon, the developer of the Python Photogrammetry Toolbox (PPT), and Luca Bezzi and Alessandro Bezzi, the developers of ArcheOS and the PPT GUI.

This tutorial includes many examples and some source files that will help you learn how PPT works.

So, let's go!

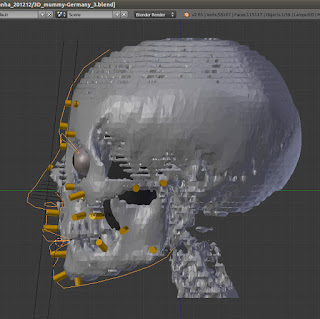

The image above shows the object that we'll scan in this tutorial.

How to make a 3D scan with pictures and the PPT GUI

First of all, download the Python Photogrammetry Toolbox from: http://www.arc-team.homelinux.com/arcteam/ppt.php

After downloading and unzipping it, edit the ppt_gui_start file and set the correct path to the program (the part highlighted in orange).

Now, if you are on Linux, just run the edited script:

$ ./ppt_gui_start
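If the shell refuses to run the script, the usual causes are a wrong path or a missing execute permission after unzipping. The sketch below checks both before starting the launcher; the function name `start_ppt_gui` is hypothetical and not part of PPT, and only the file name ppt_gui_start comes from this tutorial.

```shell
# Hypothetical helper (not part of PPT): start the GUI launcher from the
# folder where PPT was unzipped, passed as the first argument.
start_ppt_gui() {
    ppt_dir="$1"
    launcher="$ppt_dir/ppt_gui_start"

    # Stop early if the path is wrong, the most common setup mistake.
    if [ ! -f "$launcher" ]; then
        echo "ppt_gui_start not found in: $ppt_dir" >&2
        return 1
    fi

    # Unzipping can drop the execute bit; restore it only if needed.
    [ -x "$launcher" ] || chmod +x "$launcher"

    # Run from inside the PPT folder in a subshell, so the caller's
    # current directory is left unchanged.
    (cd "$ppt_dir" && ./ppt_gui_start)
}
```

For example, `start_ppt_gui ~/ppt` assumes PPT was unzipped into a folder named ppt in your home directory; adjust the path to wherever you put it.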

Once the program is open, click on “Check Camera Database”.

With the Terminal/Prompt open alongside, click on “Select Photos Path”.

Choose the path and then click on “Open”.

If everything is OK, you’ll see this message in the Terminal:

Camera is already inserted into the database

If not, you can customise the camera database by following this video tutorial:

Now, copy the path.

1) Go to “Run Bundler”.

2) Paste it into “Select Photos Path”.

1) For a better-quality scan, click on “Scale Photos with a Scaling Factor”; by default, the value is 1. If your computer has limited processing power, skip this step (1) and go directly to the step below (2).

2) Click on “Run”.

Wait a few minutes while the program computes the point cloud.

You will know that the process is done when this message appears in the Terminal:

Finished! See the results in the '/tmp/DIRECTORY' directory

In this case the message was:

Finished! See the results in the '/tmp/osm-bundler-ibBZV9' directory
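The suffix after osm-bundler- appears to change on every run, so instead of copying it by hand you can ask the shell for the most recently modified results directory. This is a minimal sketch; the function name `latest_bundler_dir` is hypothetical, and it only assumes the results land in /tmp as in the message above.

```shell
# Hypothetical helper: print the most recently modified osm-bundler-*
# directory. The base directory defaults to /tmp, where the message
# above says the results are written.
latest_bundler_dir() {
    base="${1:-/tmp}"
    # ls -dt lists directories themselves (not their contents), newest
    # first; errors for a missing match are silenced.
    ls -dt "$base"/osm-bundler-* 2>/dev/null | head -n 1
}
```

For example, `cd "$(latest_bundler_dir)"` takes you straight to the folder from the last run.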

Nautilus will also open, showing the directory with the files.

NOTE: If you are really curious, you can open the Bundle directory and view the .PLY files in Meshlab. But it is better to wait, because this point cloud is not good enough for reconstruction/conversion into a mesh.
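If you do want to peek at the sparse cloud, the sketch below lists the .ply files in a results directory while skipping anything under pmvs, where the dense cloud is written later. The function name `list_sparse_clouds` is hypothetical, and since the exact file names inside the Bundle directory are not given here, it simply searches for any .ply file.

```shell
# Hypothetical helper: list the sparse .ply point clouds in a results
# directory, excluding the dense PMVS output under pmvs/.
list_sparse_clouds() {
    find "$1" -name '*.ply' ! -path '*/pmvs/*' | sort
}
```

On most installs Meshlab accepts a file path on the command line, so something like `meshlab "$(list_sparse_clouds /tmp/osm-bundler-ibBZV9 | head -n 1)"` should open the first one.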

1) Go to “or run PMVS without CMVS”.

2) Click on “Use directly PMVS2 (without CMVS)”.

3) Click on “Run”.

When the process is done, you’ll see a new directory named “pmvs” appear.

Then go into “models” and look for a file named “pmvs_options.txt.ply”. If it is there, the solving process is finished.
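The same check can be done from the Terminal. A minimal sketch, assuming the pmvs/models/pmvs_options.txt.ply layout described above; the function name `find_dense_cloud` is hypothetical.

```shell
# Hypothetical helper: print the path of the dense PMVS cloud inside a
# results directory. Fails (and prints nothing) if the file is missing,
# which usually means PMVS has not finished yet.
find_dense_cloud() {
    ply="$1/pmvs/models/pmvs_options.txt.ply"
    [ -f "$ply" ] || return 1
    printf '%s\n' "$ply"
}
```

For example, `find_dense_cloud /tmp/osm-bundler-ibBZV9 || echo "PMVS not done yet"`.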

NOTE: It’s a good idea to copy the osm-* directory to your home folder, because it lives in /tmp and will be lost at the next boot.
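A minimal sketch of that copy, with a small safety check. The function name `save_results` is hypothetical; it copies into your home folder unless you pass a different destination.

```shell
# Hypothetical helper: copy a results directory out of /tmp into a
# destination folder ($HOME by default) and print the new location.
save_results() {
    src="$1"
    dest="${2:-$HOME}"
    [ -d "$src" ] || { echo "not a directory: $src" >&2; return 1; }
    mkdir -p "$dest"
    # -R copies recursively; -p keeps timestamps and permissions.
    cp -Rp "$src" "$dest"/
    printf '%s\n' "$dest/$(basename "$src")"
}
```

For example, `save_results /tmp/osm-bundler-ibBZV9` leaves a copy at ~/osm-bundler-ibBZV9.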

When you open “pmvs_options.txt.ply” in Meshlab, you’ll see that the point cloud is now really dense, almost with the quality of a picture.
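To put a number on "really dense", you can read the point count from the file header: every PLY file starts with a plain-text header that declares `element vertex N`, even when the data after it is binary. The sketch below assumes a standard PLY header; the function name `ply_vertex_count` is hypothetical.

```shell
# Hypothetical helper: print the vertex count declared in a PLY header.
# awk stops at "end_header", so it never parses the point data itself.
ply_vertex_count() {
    awk '/^end_header/ { exit }
         $1 == "element" && $2 == "vertex" { print $3 }' "$1"
}
```

Running it on a sparse Bundler .ply and then on pmvs_options.txt.ply makes the difference between the two clouds easy to see.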

It looks like a picture or a mesh... but notice that “Points” is the selected view mode.

If you select “Flat Lines”, for example, the point cloud will disappear... because, obviously, it’s a point cloud.

Click on “Points” again to see the point cloud, and then:

1) Click on “Show Layer Dialog” (A)

2) A new panel will appear in the interface with the name of the object, in this case “pmvs_options.txt.ply” (B).

Go to “Filters” -> “Remeshing, simplification and reconstruction” -> “Surface Reconstruction: Poisson”

A new window will appear with the default values of “Octree Depth” and “Solver Divide”.

1) Change the values to:

Octree Depth: 11

Solver Divide: 9

2) Click on “Apply”.

NOTE: These values can crash the program if your computer does not have enough processing power.
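Why do these values weigh so much on the machine? In Poisson reconstruction, an Octree Depth of d means the finest cells correspond to a grid of up to 2^d cells along each axis, so every extra level doubles the resolution in all three directions. In Kazhdan's Poisson reconstruction code, Solver Divide is the depth at which a block Gauss-Seidel solver takes over to reduce memory use, at the cost of some extra time. The worked arithmetic below (the function name is hypothetical) shows the resolution per depth:

```shell
# Worked arithmetic: with Octree Depth d, the finest octree level
# corresponds to a grid of 2^d cells per axis.
poisson_grid_resolution() {
    echo $((1 << $1))
}

for depth in 8 9 10 11; do
    echo "Octree Depth $depth -> up to $(poisson_grid_resolution "$depth") cells per axis"
done
```

At depth 11 that is up to 2048 cells per axis, which explains why weaker computers can run out of memory.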

If everything runs OK, you will notice two things:

1) A lot of new white points over the reconstruction.

2) A new layer in the upper right named “1 Poisson mesh *”

But when we go back to “Flat Lines” to view the mesh, strange things can happen. In this case, the Poisson algorithm created a kind of ball to reconstruct the mesh.

We can see this better when we orbit away from the model.

So, to make the door visible, we:

1) Go back to the “Points” view (A).

2) Orbit the scene to see the side of the door.

3) Click on “Select faces in a rectangular region”

So:

1) Drag a selection window over the region to be deleted (1A-2A).

2) Click on “Delete the current set of selected faces”.

Now we can see the mesh from the correct side.

But when we change the view type to “Smooth”, we see the mesh in white, without the colors of the point cloud.

To paint the mesh with the colors of the point cloud, go to:

Filters -> Sampling -> Vertex Attribute Transfer

A substantial part of this step was learned from this video: http://vimeo.com/14783202

A new window will appear.

You’ll have to swap the objects, because “pmvs_options.txt.ply” is the real Source mesh, the base for the painting, and the “Poisson mesh” will receive the colors, so it is the Target mesh.

When you click on “Apply”, you’ll immediately see the mesh colored, like the image above.

If you want to send this mesh to other software like Blender, go to:

File -> Export Mesh As...

Choose a place to save the .PLY file.

If everything is OK, the mesh will import perfectly into Blender (or other software).
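If the mesh shows up white in Blender, the colors may not have been saved: Meshlab's export dialog lets you choose which attributes go into the file. A quick way to check is to look for red/green/blue properties on the vertex element of the PLY header. A minimal sketch; the function name `ply_has_vertex_colors` is hypothetical, and it only recognizes properties named red/green/blue.

```shell
# Hypothetical helper: succeed (exit status 0) if a PLY header declares
# a "red" property on its vertex element, i.e. per-vertex colors.
ply_has_vertex_colors() {
    awk '/^end_header/ { exit }
         $1 == "element" { in_vertex = ($2 == "vertex") }
         in_vertex && $1 == "property" && $NF == "red" { found = 1 }
         END { exit !found }' "$1"
}
```

For example, `ply_has_vertex_colors mesh.ply && echo "colors saved"`, where mesh.ply stands for whatever name you exported.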

Other examples:

If you want, you can download a sequence of pictures of the Taung Child (animation above) to make your own test here:

And see if it matches the final result here:

I hope this has been useful to you.

A big hug, and see you in the next article!